[ case study ]

Long-Range Spatial Awareness for Maritime Detection and Avoidance

The Mission:

Support safe, reliable high-speed travel over open water through long-range spatial perception.

The Innovator:

REGENT is building a new class of vehicle: Seagliders—high-speed, wing-in-ground-effect vessels designed to move people and cargo efficiently over coastal waters. Operating just above the surface, these vehicles promise lower emissions and faster regional transportation.

But speed and proximity to the water demand something more than efficiency: they demand confidence in what lies ahead, at speed and at distance.

The Challenge:

Knowing What Lies Ahead in Time to Act

Open water is one of the most difficult environments for reliable perception. The surface is dynamic and reflective, glare and spray reduce contrast, and many hazards—including kayaks, debris, buoys, and people—sit low and emerge late. At high speed, reaction time collapses as stopping distances grow and planning horizons shrink.

Traditional sensing struggles under these conditions:

Radar produces water clutter and ambiguous targets

LiDAR range degrades in spray and mist

Monocular vision cannot measure true distance at long range

For REGENT, the perception system is intended to:

See at ranges from hundreds of meters up to kilometer scale

Detect small, low-profile objects above a dynamic water surface

Measure true physical distance and structure, not appearance

Remain reliable in glare, haze, spray, and low-texture scenes

Operate passively, day and night, without reliance on active emitters

Meeting this requirement means moving beyond detection alone to continuous, long-range measurement of free space and hazards.

The Ally:

NODAR addresses the maritime perception challenge by treating distance as a geometric problem rather than a machine learning task. NODAR’s Hammerhead forms part of REGENT’s broader multi-sensor perception architecture. Hammerhead preserves triangulation accuracy at distance, enabling metric 3D perception using passive cameras rather than active emitters.

Rather than inferring risk from appearance or reflectivity, NODAR reconstructs the physical structure of the scene in real time. The water surface can be modeled as a geometric surface, distant vessels and obstacles emerge as measurable 3D structures, and navigable free space can be continuously quantified ahead of the vehicle.

For maritime autonomy, this enables situational awareness based on geometry—providing the early, reliable spatial understanding required for planning and control at speed.

The Proving Ground:

Testing in Open Water

REGENT integrated NODAR’s Hammerhead perception system to evaluate long-range sensing performance over real water conditions.

The trials exposed the system to the realities of open water: glare, low contrast, dynamic surfaces, and small, low-profile objects at distance. Throughout testing, Hammerhead continuously reconstructed the water surface in 3D while isolating distant vessels and hazards as measurable spatial objects—even when they appeared very small in the image.

By measuring distance directly, Hammerhead enabled early awareness, extending the planning horizon and supporting confident decision-making at speed.

The Technical Transformation:

From Detection to Understanding

By reconstructing the scene in 3D at long range, Hammerhead provides actionable information that planning systems can rely on:

Long-Range Hazard Detection - Small craft and obstacles are detected hundreds of meters to ~1 km ahead, even when they appear very small in the image.

True Distance Measurement - Physical distance and geometry are derived through stereoscopic triangulation, rather than inferred from reflectivity, appearance, or learned priors.

Reliable Over Water - Stereo geometry remains effective over low-texture, reflective surfaces, where many sensing modalities can experience degradation.

Day and Night Operation - Passive sensing performs in glare, haze, and low light, without reliance on active emitters.

Continuous Free-Space Awareness - The water surface can be continuously modeled in real time, enabling confident planning at speed.

The result is not a collection of detections, but a spatial model of the environment that supports safe, high-speed operation.

The Takeaway:

For teams building autonomy on water, the challenge is determining where free space ends and risk begins—far enough ahead to matter.

REGENT’s impressive work demonstrates that NODAR’s long-range, passive stereo vision can help provide the understanding required for safe, reliable maritime transportation, complementing existing sensing modalities in conditions where radar clutter or LiDAR degradation can occur.

This moves perception beyond isolated warnings toward continuous spatial understanding.

The Data:

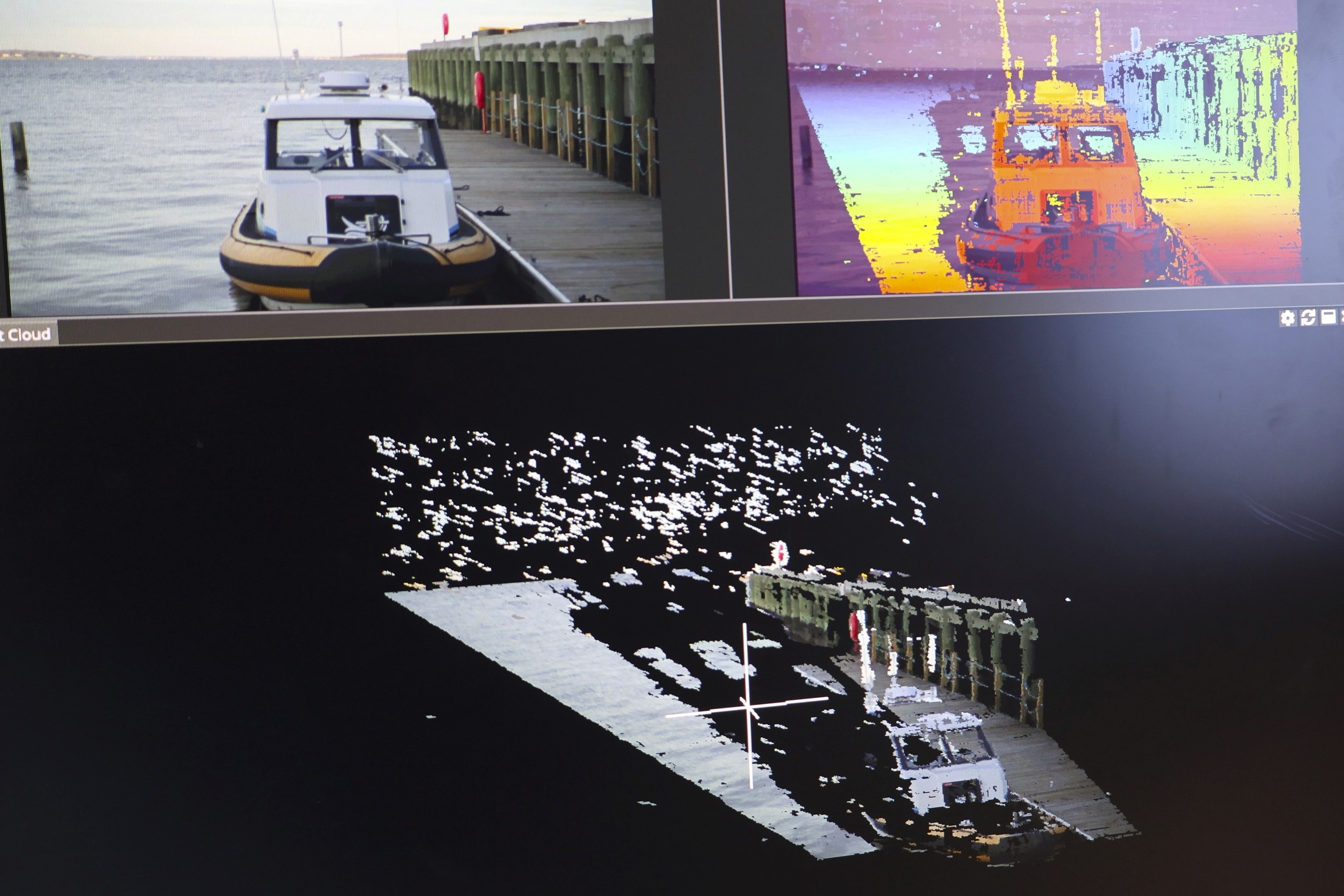

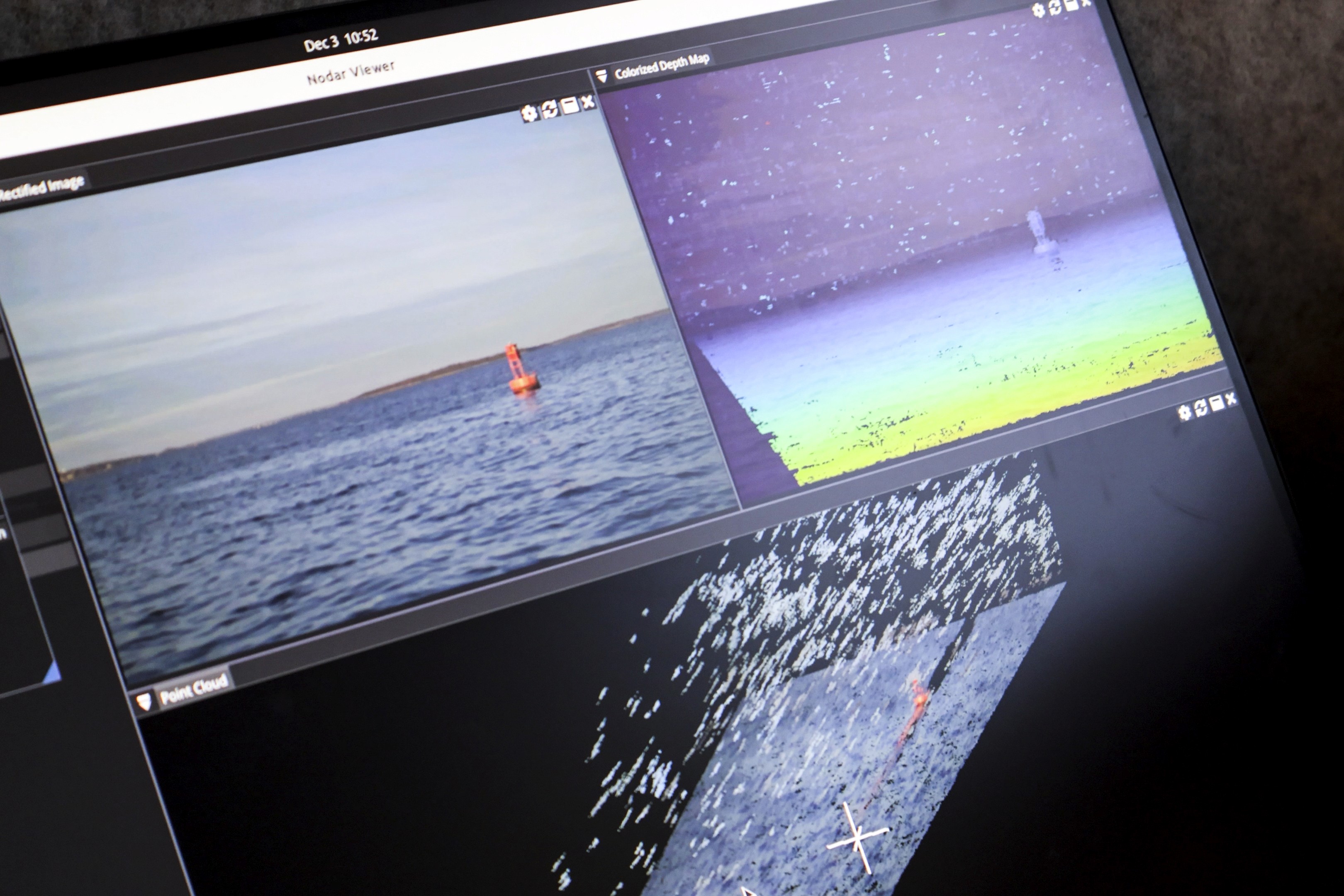

Check out the real-time output from NODAR Hammerhead as displayed in NODAR Viewer. Each frame represents the data available during operation:

Upper left: Left rectified image

Upper center: Depth map, alpha-blended with intensity imagery

Right: GridDetect occupancy map (0–400/500 m range, 20 cm grid resolution)

Bottom: Colorized 3D point cloud

Observe how distance, free space, and hazards are reconstructed continuously at long range.

Dynamic

Starboard to Port

Port to Starboard

Achieve these results with the NODAR HDK or SDK

Technical Specifications

Stereo Geometry

Resolution: 2880×1860

FOV: 30.5° × 20.0°

Baseline: 5.1 m

Focal length: 5289 px

Angular resolution: 0.0108°/px

Lateral resolution: 1.9 cm @ 100 m

Operating Range

Minimum range: 26.4 m

Maximum range (<2% range error): 5.4 km (good weather), 1.8 km (bad weather)
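The figures above follow from standard pinhole and triangulation relations, so they can be cross-checked with a few lines of arithmetic. The sketch below recomputes field of view, per-pixel resolution, and the range at which triangulation error reaches 2%, using the listed resolution, focal length, and baseline. The disparity uncertainties of 0.1 px and 0.3 px are assumptions, chosen only because they reproduce the listed good-weather and bad-weather ranges; the source does not state them. The function names are illustrative.

```python
import math

# Values copied from the specification list above.
WIDTH_PX, HEIGHT_PX = 2880, 1860
FOCAL_PX = 5289.0
BASELINE_M = 5.1


def fov_deg(size_px, focal_px):
    """Full field of view of a pinhole camera along one image axis."""
    return math.degrees(2.0 * math.atan(size_px / (2.0 * focal_px)))


def angular_resolution_deg(focal_px):
    """Angle subtended by one pixel near the image center."""
    return math.degrees(1.0 / focal_px)


def lateral_resolution_m(range_m, focal_px):
    """Footprint of one pixel at the given range (small-angle approximation)."""
    return range_m / focal_px


def range_for_relative_error(rel_error, disparity_error_px, focal_px, baseline_m):
    """Range at which the relative triangulation error reaches rel_error.

    From Z = f * B / d, a disparity error dd gives dZ ~= Z**2 * dd / (f * B),
    so dZ / Z equals rel_error at Z = rel_error * f * B / dd.
    """
    return rel_error * focal_px * baseline_m / disparity_error_px


if __name__ == "__main__":
    print("FOV (deg):", fov_deg(WIDTH_PX, FOCAL_PX), fov_deg(HEIGHT_PX, FOCAL_PX))
    print("Angular resolution (deg/px):", angular_resolution_deg(FOCAL_PX))
    print("Lateral resolution at 100 m (m):", lateral_resolution_m(100.0, FOCAL_PX))
    # Assumed disparity uncertainty: 0.1 px (good weather), 0.3 px (bad weather).
    for label, dd in (("good weather", 0.1), ("bad weather", 0.3)):
        z = range_for_relative_error(0.02, dd, FOCAL_PX, BASELINE_M)
        print(f"2% error range, {label} (m):", z)
```

Under these assumptions the computed field of view lands within about 0.1° of the listed values, and the 2% error ranges come out at roughly 5.4 km and 1.8 km. Because range error grows with the square of distance, tripling the disparity uncertainty cuts the usable range to a third.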

Reproducing This Deployment

This system can be implemented in two ways, depending on whether you are starting from scratch or integrating into an existing platform.

Path 1 — Turn-Key System (HDK)

Use this path if you want the fastest time-to-first-results with minimal hardware setup.

Purchase the NODAR HDK: https://www.nodarsensor.com/order

HDK documentation: https://docs.nodarsensor.net/hdk/index.html

HDK Datasheet: https://nodarsensor.notion.site/hdk-datasheet-v2-0-1

The HDK provides a pre-validated stereo sensing platform including SDK, synchronized cameras, enclosure, onboard compute, networking, and timing configuration.

Bring-Up Steps

1. Mount the Unit

Mount the HDK rigidly facing the operating scene.

Guidelines:

• Unobstructed overlapping field of view

• Stable mechanical attachment

Check the Mounting Information in the datasheet.

2. Quick Start

HDK Quick Start: https://docs.nodarsensor.net/hdk/quick_start/

3. Start Hammerhead

Follow the quickstart procedure to launch Hammerhead.

4. Start NODAR Viewer

Follow the quickstart procedure to launch NODAR Viewer.

Expected result:

• Rectified stereo images

• Color-blended depth map

• 3D point cloud

• Occupancy map

The system is now operational.

5. Integrate Outputs (Optional)

ZMQ interface (recommended): https://docs.nodarsensor.net/zmq/overview/

ROS2 interface: https://docs.nodarsensor.net/ros2/overview/

Path 2 — Integrate Into Existing Hardware (SDK)

Use this path if you already have stereo cameras and compute hardware.

Download the NODAR SDK - Make sure you choose the Hammerhead with Grid Detect option: https://buy.nodarsensor.net/

SDK Documentation - https://docs.nodarsensor.net/

Bring-Up Steps

1. Confirm Platform Compatibility

Verify that your operating system and GPU meet the supported-system requirements.

Documentation: https://docs.nodarsensor.net/sdk/index.html#supported-systems

2. Install Software

Install Hammerhead and Viewer: https://docs.nodarsensor.net/sdk/index.html#getting-started

After installation verify:

3. Test Using Sample Data

Run the example pipeline before connecting real cameras.

This confirms the runtime environment is correct.

Expected:

• Viewer connects

• Depth map visible

• Point cloud visible

4. Connect Cameras

Lucid camera integration: https://docs.nodarsensor.net/sdk/index.html#lucid-cameras

Custom cameras via network publisher: https://docs.nodarsensor.net/sdk/index.html#topbot-publisher

5. Provide Calibration Files

Required files:

• intrinsics.ini

• extrinsics.ini

• master_config.ini

Download the sample configuration files and adapt them to your cameras:

https://docs.nodarsensor.net/config/extrinsics.html

https://docs.nodarsensor.net/config/intrinsics.html

https://docs.nodarsensor.net/config/master_config.html
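Before starting the runtime, it can help to confirm that all three required files are in place. The sketch below is a minimal pre-flight check. It assumes the files sit together in one directory, which is a convenience for this example and not a documented requirement. The helper name `missing_calibration_files` is hypothetical and not part of the SDK.

```python
from pathlib import Path

# File names taken from the "Required files" list above.
REQUIRED_FILES = ("intrinsics.ini", "extrinsics.ini", "master_config.ini")


def missing_calibration_files(config_dir):
    """Return the required file names that are absent from config_dir."""
    root = Path(config_dir)
    return [name for name in REQUIRED_FILES if not (root / name).is_file()]


if __name__ == "__main__":
    # "config" is a placeholder directory; point it at your own location.
    missing = missing_calibration_files("config")
    if missing:
        print("Missing calibration files:", ", ".join(missing))
    else:
        print("All calibration files found.")
```

The check only tests for presence. It does not validate the contents, which depend on your cameras and the parameter reference linked above.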

Check the following parameters in master_config.ini, which must be tuned for your operating environment.

Enable the box filter to suppress sky-related artifacts and blinking points above the horizon. This cleans up the point cloud before obstacle detection.

Then enable GridDetect, which generates obstacle detections and occupancy maps from the filtered point cloud.
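To make the order of these two stages concrete, the sketch below illustrates the general idea: crop the point cloud to an axis-aligned box, then bin the surviving points into ground-plane cells. It is a conceptual illustration only, not NODAR's implementation, and the source does not describe GridDetect's algorithm. The function names, the `min_points` threshold, and the coordinate frame (x forward, y left, z up, in meters) are all assumptions. The 0.2 m default cell size mirrors the 20 cm grid resolution quoted for the occupancy map.

```python
import math
from collections import Counter


def box_filter(points, x_range, y_range, z_range):
    """Keep only (x, y, z) points that fall inside an axis-aligned box."""
    (x0, x1), (y0, y1), (z0, z1) = x_range, y_range, z_range
    return [
        (x, y, z)
        for x, y, z in points
        if x0 <= x <= x1 and y0 <= y <= y1 and z0 <= z <= z1
    ]


def occupancy_grid(points, cell_m=0.2, min_points=2):
    """Bin points into ground-plane cells and return the occupied cell indices.

    A cell counts as occupied when it holds at least min_points points,
    which rejects isolated single-point noise.
    """
    counts = Counter(
        (math.floor(x / cell_m), math.floor(y / cell_m)) for x, y, _ in points
    )
    return {cell for cell, n in counts.items() if n >= min_points}


if __name__ == "__main__":
    cloud = [
        (100.1, 1.1, 0.5),    # two returns from the same low obstacle
        (100.15, 1.15, 0.8),
        (250.0, -3.0, 40.0),  # sky artifacts well above the horizon
        (250.1, -3.0, 41.0),
        (300.1, 0.1, 0.3),    # isolated single point
    ]
    filtered = box_filter(cloud, (0.0, 500.0), (-50.0, 50.0), (-1.0, 5.0))
    print("Points kept:", len(filtered))
    print("Occupied cells:", occupancy_grid(filtered))
```

Filtering first matters because points above the horizon would otherwise project onto the ground plane and show up as phantom obstacles in the grid.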

6. Start the Runtime

Start runtime:

Open viewer:

Expected result:

• Rectified stereo images

• Color-blended depth map

• 3D point cloud

• Occupancy map

7. Integrate Outputs (Optional)

ZMQ interface (recommended): https://docs.nodarsensor.net/zmq/overview/

ROS2 interface: https://docs.nodarsensor.net/ros2/overview/