At NODAR, we've always believed that a perception system only proves its worth when conditions get hard. Bright sun and dry roads don't separate the best from the rest. Rain, fog, darkness, and glare do. That's why we put our wide-baseline stereo technology through one of the most rigorous adverse-weather evaluations we've conducted to date, partnering with a leading automotive safety research institute to test across 84 distinct scenarios.

The results confirmed what we suspected: NODAR doesn't just hold up in bad conditions. It outperforms the sensors that have long been considered the benchmark.

The Test

Our stereo system features a pair of 5.4MP Sony IMX490 cameras on an 89.5 cm baseline with a 30-degree field of view. This setup was evaluated against a standard set of real-world obstacles: a car, traffic signs, a picket fence, traffic cones, and a bicycle, at distances ranging from 4m to 50m.
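For a rough sense of what that geometry implies, the standard pinhole stereo relation Z = f·B/d can be evaluated for the stated baseline and field of view. This is a minimal sketch under stated assumptions, not NODAR's processing pipeline: it assumes the 30-degree figure is the horizontal field of view, an image width of 2896 pixels (an assumed value, not given in the test description), an ideal rectified pinhole pair, and a purely illustrative 0.1-pixel disparity error. All function names are hypothetical.

```python
import math

BASELINE_M = 0.895       # 89.5 cm baseline, from the test setup
FOV_DEG = 30.0           # assumed to be the horizontal field of view
IMAGE_WIDTH_PX = 2896    # assumed horizontal resolution, not stated in the test


def focal_length_px(image_width_px: float, fov_deg: float) -> float:
    """Focal length in pixels for an ideal pinhole camera."""
    return (image_width_px / 2.0) / math.tan(math.radians(fov_deg) / 2.0)


def disparity_px(depth_m: float, focal_px: float, baseline_m: float) -> float:
    """Disparity produced by a point at depth_m: d = f * B / Z."""
    return focal_px * baseline_m / depth_m


def depth_error_m(depth_m: float, focal_px: float, baseline_m: float,
                  disparity_error_px: float) -> float:
    """First-order depth uncertainty: dZ = Z^2 / (f * B) * dd."""
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_error_px


if __name__ == "__main__":
    f_px = focal_length_px(IMAGE_WIDTH_PX, FOV_DEG)
    # Nearest and farthest obstacle distances used in the evaluation.
    for z in (4.0, 50.0):
        d = disparity_px(z, f_px, BASELINE_M)
        # 0.1 px is an illustrative matching error, not a measured value.
        err = depth_error_m(z, f_px, BASELINE_M, disparity_error_px=0.1)
        print(f"{z:5.1f} m -> disparity {d:7.1f} px, depth error {err * 100:.2f} cm")
```

In this model, depth uncertainty at a given range is inversely proportional to the baseline, which is the basic geometric argument for a wide-baseline design.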

Conditions were deliberately punishing:

Dry conditions at full brightness, in darkness, and with blinding glare

Heavy rain at 32 mm/h

Torrential rain at 96 mm/h

Dense fog with just 50m of visibility

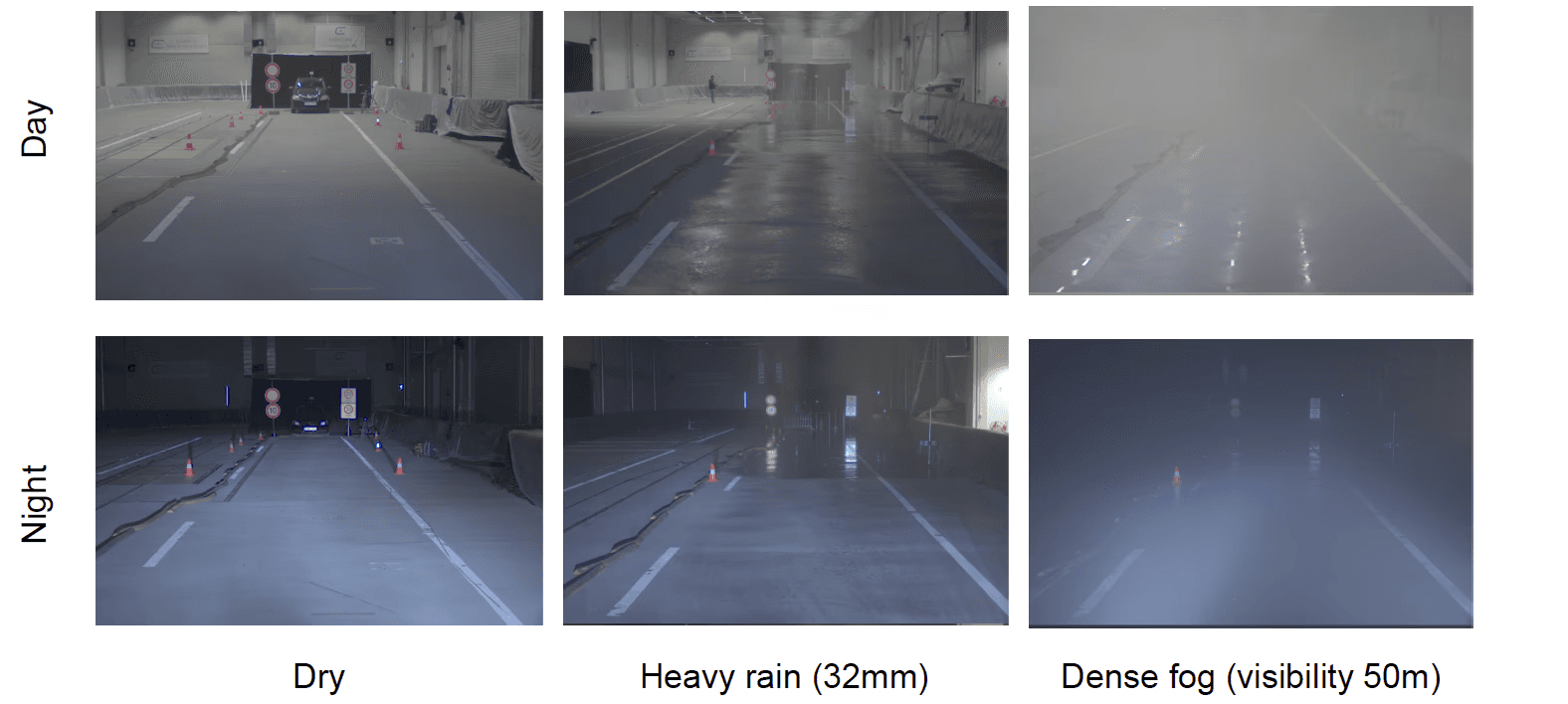

Each scenario was run during the day and at night, and results were compared directly against automotive-grade LiDAR.

Camera views across all tested conditions.

What We Found

In dry conditions, NODAR detected between 100k and 1.5 million points per frame on-target, with consistent performance whether the scene was fully lit, completely dark, or hit with a direct blinding glare source. LiDAR produced a sparse, low-density output by comparison. NODAR's high-density point cloud meant every obstacle was easily recognizable, with accurate and dense road reconstruction across all lighting configurations. Glare, which can saturate other sensors, had no meaningful impact on performance.

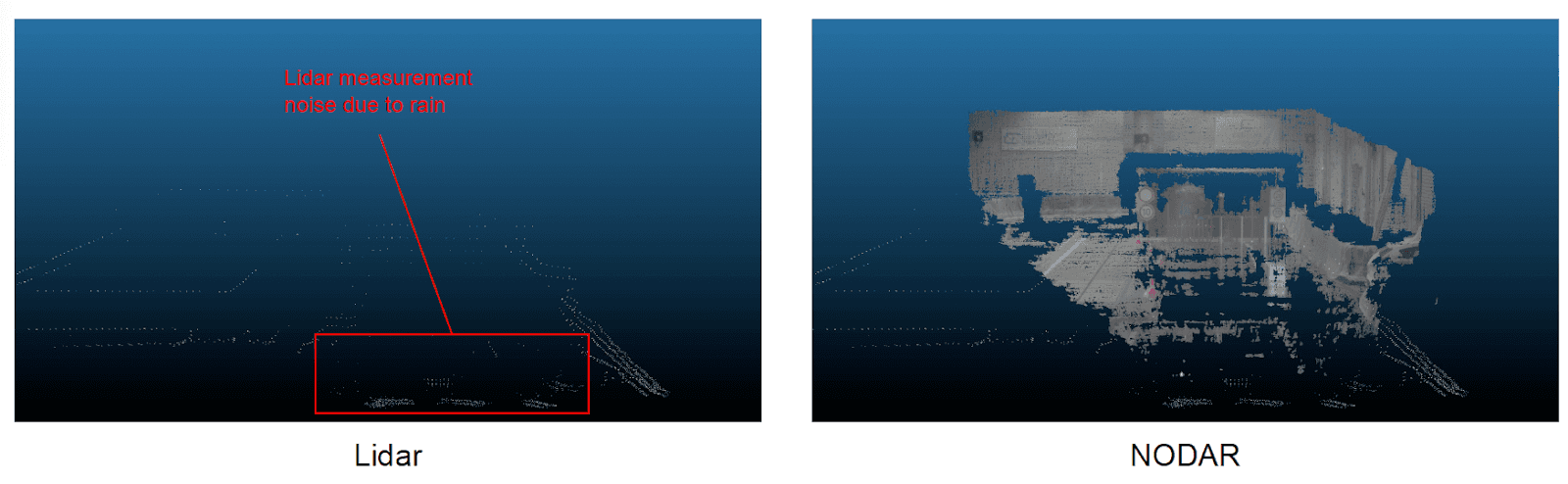

In heavy rain, LiDAR struggled with measurement noise as water droplets scattered the laser pulses. NODAR continued generating high-density point clouds, with approximately 300k–400k detected points in the target region. Low-confidence measurements were filtered out automatically, and all obstacles remained clearly visible at every distance of interest.

Heavy rain (32 mm/h): LiDAR point cloud with rain-induced noise (left) vs. NODAR's clean, high-density output (right).

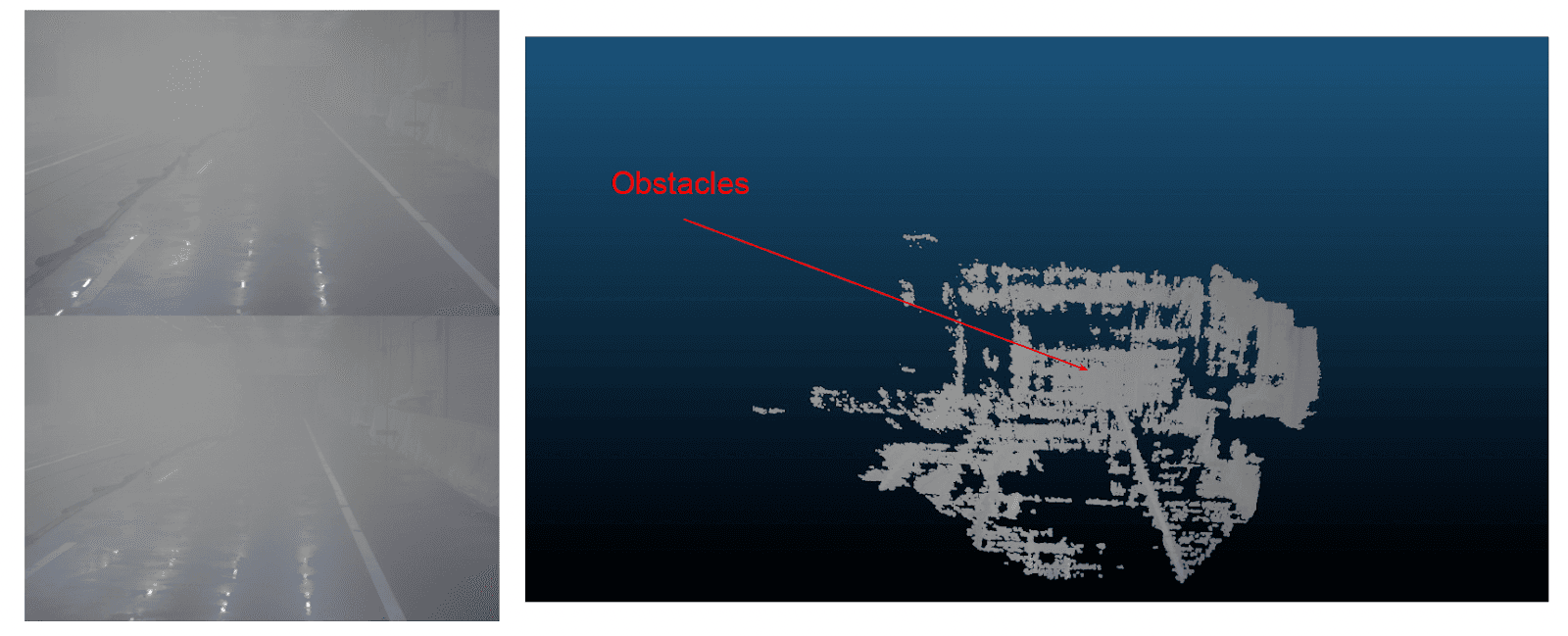

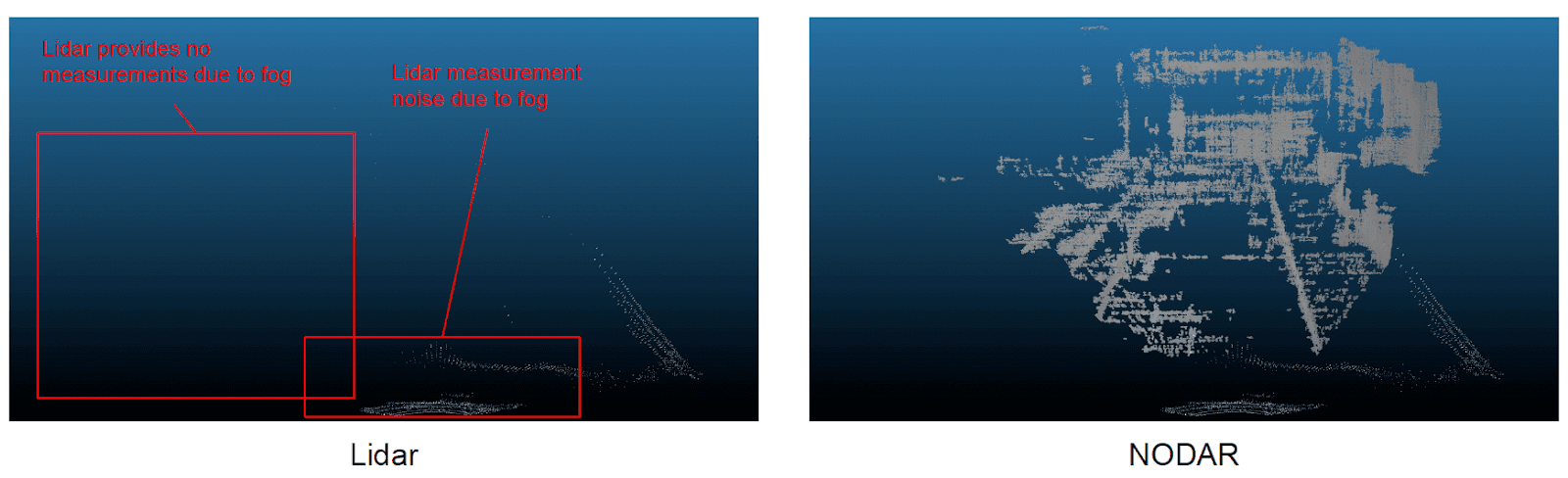

In dense fog, the gap became most striking. At 50m visibility, LiDAR provided virtually no useful measurements. The fog scattered the laser returns entirely, leaving near-empty point clouds. NODAR, processing passive stereo imagery, produced a dense and accurate 3D reconstruction of the scene. The most dramatic finding of the evaluation: NODAR detected obstacles that were completely invisible to the human eye. When a sensor surpasses human vision, it stops being a camera substitute and becomes something fundamentally different.

Dense fog (50m visibility): the camera sees only white. NODAR's point cloud pinpoints obstacles clearly.

In dense fog, LiDAR returns virtually nothing. NODAR maintains a high-density, accurate point cloud.

Beyond Visible Light: LWIR Stereo

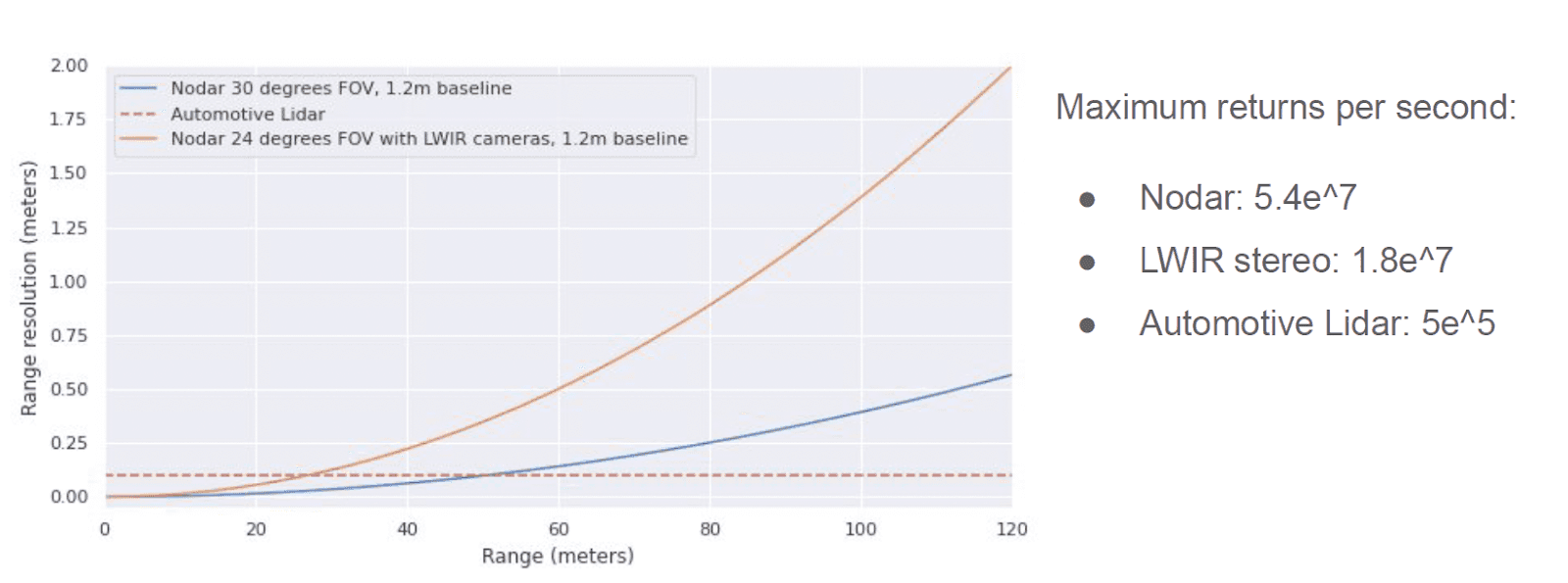

We also evaluated NODAR running on a pair of long-wave infrared (LWIR) thermal cameras, a configuration that adds excellent performance in dust and provides additional robustness at night and in adverse weather. LWIR stereo delivered strong 3D reconstruction with NODAR's wide-baseline approach, confirming that our software stack is camera-agnostic and can be paired with the right sensor modality for each application.

Why This Matters

The industry has a quiet assumption baked into its sensor roadmaps: LiDAR is the reliable baseline, and cameras are supplementary. Our data challenges that assumption directly. In fog, a condition that represents real operational risk for any autonomous system, LiDAR stops working. NODAR doesn't.

Wide-baseline stereo with NODAR's processing pipeline delivers up to 54 million depth returns per second, roughly 100x more than an automotive LiDAR, while maintaining the ability to operate in the conditions that matter most. That combination of density, accuracy, and all-weather robustness is what next-generation autonomous systems are going to need.
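The arithmetic behind those two figures is easy to check. The sketch below is illustrative only and uses just the numbers quoted in this post. The implied LiDAR rate is simply the stereo rate divided by the quoted ratio, and the implied frame rate assumes every pixel of a 5.4 MP frame yields one depth return, which is an upper-bound assumption since no frame rate is stated here. Function names are hypothetical.

```python
STEREO_RETURNS_PER_S = 54_000_000  # "up to 54 million", from the text
DENSITY_RATIO = 100                # "roughly 100x", from the text
PIXELS_PER_FRAME = 5_400_000       # 5.4 MP sensor, from the test setup


def implied_lidar_rate(stereo_rate: float, ratio: float) -> float:
    """LiDAR returns per second implied by the quoted density ratio."""
    return stereo_rate / ratio


def implied_frame_rate(stereo_rate: float, pixels_per_frame: float) -> float:
    """Frames per second if every pixel produced one depth return (assumption)."""
    return stereo_rate / pixels_per_frame


if __name__ == "__main__":
    lidar = implied_lidar_rate(STEREO_RETURNS_PER_S, DENSITY_RATIO)
    fps = implied_frame_rate(STEREO_RETURNS_PER_S, PIXELS_PER_FRAME)
    print(f"Implied LiDAR rate: {lidar:,.0f} returns/s")
    print(f"Implied frame rate at full pixel yield: {fps:.0f} fps")
```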

For more details about these tests, check out the IEEE Spectrum article.

NODAR delivers up to 100x more depth returns per second than automotive-grade LiDAR.

See It for Yourself

Autonomy that only works in good weather isn't autonomy. The scenarios that matter most — the ones where human operators also struggle — are exactly where your perception system has to hold. Our evaluation shows NODAR holds. If you're working on an autonomous vehicle, robotics, or ADAS program and want to understand how NODAR performs in your specific operational conditions, we'd love to show you.