Real-time 3D vision for critical airspace

NODAR's FlightView brings automotive-proven 3D sensing to aviation, delivering real-time obstacle detection at ranges up to 1,000 m and generating a complete depth map of the flight environment 10 times per second — in any lighting condition, without manual calibration.

1,000 m

Detection Range

Detects buildings, trees, and other aircraft at ranges up to 1,000 m

10 fps

Real-Time Processing

Generates a new complete 3D obstacle map 10 times per second.

50 million

Depth measurements per second

Dense, per-pixel 3D sensing with no interpolation gaps

FlightView Benefits

Long-range hazard detection

Detects obstacles at ranges up to 1,000 meters, providing pilots with the reaction time needed to maneuver safely at operational speeds.

Continuous 3D hazard awareness

Provides real-time, high-resolution 3D mapping of the flight environment, enabling both immediate collision warnings and longer-term situational awareness for the pilot.

Reliable in extreme conditions

Operates in high-speed, high-vibration airframe environments with continuous autocalibration that maintains accuracy without manual intervention.

Dense 3D output

Computes depth for every pixel in the image, producing a complete 3D reconstruction of the flight environment with no interpolation gaps — enabling detection of thin hazards such as power lines at ranges up to 250 m.

Environmental resilience

120+ dB HDR sensors maintain reliable imaging in direct sunlight, low-light conditions, and dawn/dusk transitions — without degrading stereo matching quality.

Vibration-resistant calibration

Patented per-frame autocalibration continuously corrects stereo alignment under engine vibration and airframe flex, eliminating the need for manual recalibration during operation.

Warnings When It Matters

FlightView overlays detected obstacles on a cockpit display with real-time distance labels, evaluates each obstacle against the aircraft's trajectory to compute time-to-collision, and automatically triggers an audible warning when an alert threshold is crossed.

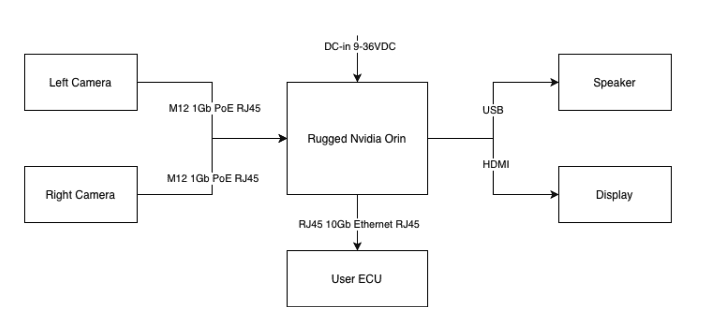
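The time-to-collision check described above can be illustrated with a minimal sketch. It assumes straight-line flight at a constant closing speed; the `Obstacle` type, the function names, and the 10-second threshold are hypothetical and are not taken from the FlightView software.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Obstacle:
    range_m: float            # current distance to the obstacle, in metres
    closing_speed_mps: float  # positive when the gap is shrinking


def time_to_collision(ob: Obstacle) -> Optional[float]:
    """Seconds until contact at constant closing speed; None if not closing."""
    if ob.closing_speed_mps <= 0.0:
        return None
    return ob.range_m / ob.closing_speed_mps


def should_warn(ob: Obstacle, ttc_threshold_s: float = 10.0) -> bool:
    """Warn when the time-to-collision drops below the (assumed) threshold."""
    ttc = time_to_collision(ob)
    return ttc is not None and ttc < ttc_threshold_s


# 120 mph is roughly 53.6 m/s.
far_tower = Obstacle(range_m=1000.0, closing_speed_mps=53.6)
near_tower = Obstacle(range_m=400.0, closing_speed_mps=53.6)
print(should_warn(far_tower), should_warn(near_tower))
```

Under these assumptions, an obstacle first seen at 1,000 m leaves roughly 18 to 19 seconds before contact at 120 mph, which is the margin the long detection range is meant to buy.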

System Architecture

FlightView is built on the NODAR Hammerhead Reference Design (NDR-HDK-2.0-100-10-A), a production-ready hardware and software platform for ultra-wide-baseline stereo vision. The system ships fully assembled and produces high-resolution 3D point clouds out of the box, requiring only a power source and, optionally, a display. The cameras can be removed from the enclosure and mounted independently, for example to use a longer stereo baseline.

Core System. HDK (NDR-HDK-2.0-100-10-A)

Compute module. Ruggedized NVIDIA Jetson Orin AGX 64 GB. Runs the complete Hammerhead software stack: stereo rectification, autocalibration, stereo matching, and GridDetect obstacle detection. Ubuntu Linux with CUDA acceleration. Integration APIs: C++, Python, and ROS2.

Sensor module. Ultra-wide-baseline stereo vision camera pair. 5.4 MP Sony IMX490 sensors, 120+ dB HDR. 1 m baseline bar; cameras are removable for custom baselines (tested up to 5 m).

Optics. 50 mm lens pair recommended for extended-range detection. Optional dual-lens configuration: 16 mm pair for wide-area situational awareness and 50 mm pair for long-range detection.

Interfaces

(Add-on) Audio alert system. USB audio output to cockpit speaker for audible collision warnings.

(Add-on) Display module. HDMI interface for visual overlay output.

Integration Hardware

Cables and interfacing equipment. Ethernet cabling for camera-to-compute connectivity.

(Custom) Mounting hardware. 1 m baseline bar with ¼"-20 tripod holes included. Cameras can be independently mounted at wider baselines for custom airframe installations.

Software Processing Pipeline

Hammerhead processes every frame through a complete four-stage pipeline, turning raw stereo imagery into real-time collision warnings without interruption.

Autocalibration. Patented algorithms correct stereo camera alignment on every frame, compensating for vibration, temperature shifts, and mechanical drift. The system tolerates angular changes of up to 0.1° per frame, eliminating the need for manual recalibration during operation.

Stereo matching. A deterministic signal-processing stereo matching algorithm computes dense disparity maps at up to 50 million depth measurements per second. The algorithm computes true per-pixel depth based on stereoscopic parallax rather than interpolating from sparse features or known objects.

GridDetect obstacle detection. A GPU-accelerated deterministic particle filter converts the dense 3D point cloud into an occupancy grid representation, providing real-time identification of free space and obstacles.

Alert generation. FlightView adds an alert logic layer on top of GridDetect that evaluates detected obstacles against the aircraft's trajectory and speed, computes time-to-collision, and triggers audible cockpit warnings when alert thresholds are crossed.
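The occupancy-grid idea behind the GridDetect stage can be illustrated with a much simpler stand-in. The sketch below is not the GPU particle filter described above: it bins 3D points into a top-down grid and marks a cell occupied when it collects enough points. The cell size, hit threshold, and function name are assumptions chosen for illustration.

```python
from collections import Counter
from typing import Iterable, Set, Tuple

Point = Tuple[float, float, float]  # (x right, y up, z forward), in metres
Cell = Tuple[int, int]              # (x index, z index) in the top-down grid


def occupied_cells(points: Iterable[Point],
                   cell_m: float = 5.0,
                   min_hits: int = 3) -> Set[Cell]:
    """Bin points into a top-down (x, z) grid; keep cells with enough hits."""
    hits = Counter(
        (int(x // cell_m), int(z // cell_m)) for x, _y, z in points
    )
    return {cell for cell, n in hits.items() if n >= min_hits}


cloud = [
    (2.0, 1.0, 102.0),    # three returns from the same structure
    (2.5, 3.0, 103.0),
    (3.0, 5.0, 104.0),
    (-40.0, 0.0, 300.0),  # a single stray point, treated as noise
]
print(occupied_cells(cloud))
```

Requiring several hits per cell is a crude way to reject isolated noise points; a filter that carries state between frames, as the pipeline description indicates, would do this more robustly.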

Each frame independently generates a complete obstacle map, and detections are tracked across consecutive frames to filter transient noise and confirm persistent obstacles before triggering an alert. At 10 fps and an operational speed of 120 mph (53.6 m/s), the system produces a new complete 3D depth map approximately every 5.4 m of aircraft travel.
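The per-frame travel figure and the idea of confirming detections across frames can be sketched as follows. The N-of-M rule, its default values, and the class name are assumptions for illustration; the source does not state how many frames FlightView requires before alerting.

```python
from collections import deque

MPH_TO_MPS = 0.44704  # exact conversion factor


def travel_per_frame_m(speed_mph: float, fps: float) -> float:
    """Distance the aircraft covers between consecutive depth maps."""
    return speed_mph * MPH_TO_MPS / fps


class PersistenceFilter:
    """Confirm an obstacle once it appears in `need` of the last `window` frames."""

    def __init__(self, need: int = 3, window: int = 5) -> None:
        self.need = need
        self.history = deque(maxlen=window)

    def update(self, detected: bool) -> bool:
        self.history.append(detected)
        return sum(self.history) >= self.need


step_m = travel_per_frame_m(120.0, 10.0)
tracker = PersistenceFilter(need=3, window=5)
confirmed = [tracker.update(seen) for seen in (True, False, True, True)]
print(round(step_m, 2), confirmed)
```

With these assumed settings, a one-frame dropout does not reset the count, and confirmation costs at least three frames, or about 16 m of travel at 120 mph.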

FlightView Resources

FlightView Technical Specification

Full hardware and software specs for the NODAR FlightView collision warning system, including detection performance, environmental ratings, and integration interfaces.

FlightView Solution Brief

An overview of NODAR FlightView — long-range 3D obstacle detection for aviation — covering key capabilities, system architecture, and deployment options.

Technical Specifications

Hardware

| Parameter | Specification |
| --- | --- |
| Camera sensor | Sony IMX490, 5.4 MP (2880 × 1860) |
| Sensor type | Rolling shutter, 120+ dB HDR |
| Pixel pitch | 3.0 µm |
| Sensor size | 8.64 mm × 5.58 mm |
| Lens options | 7 mm / f/1.6 (65° HFoV), 16 mm / f/1.6 (30° HFoV), 50 mm / f/1.6 (10° HFoV) |
| Compute unit | NVIDIA Jetson Orin AGX, 64 GB |
| Frame rate | 10 fps (FlightView configuration on Jetson Orin AGX)¹ |
| Connectivity | Ethernet (camera to ECU) |
| Mounting | 1 m baseline bar with tripod holes (¼"-20); cameras removable for custom baselines |
| Operating temperature | −25°C to +55°C (Jetson Orin AGX rated range) |
| Power input | 9-36 VDC (Orin AGX) |
| Power consumption | 75 W (max) / 6 W (idle) |
| Weight | Compute unit 4.2 kg; each camera head 67 g; reference design enclosure with cameras 3.4 kg |

¹ Higher frame rates are possible depending on configuration; see https://docs.nodarsensor.net/benchmarks.html

Hammerhead Performance

| Parameter | Specification |
| --- | --- |
| Autocalibration | Continuous, every frame |
| Initial camera alignment tolerance | ±3° for the initial mounting |
| Vibration tolerance | up to 0.1° per frame |
| Stereo matching algorithm | Deterministic signal processing (not learned/neural) |
| Max throughput | 50 million depth pixels/second |
| Depth precision | 0.05%-0.4% at 100 m (depending on baseline and FOV) |
| Maximum tested range | 1,000 m (buildings, trees, aircraft, vehicles) |
| Baseline support | Tested up to 5 m |
| GridDetect throughput | 100 million 3D points/second |
| Software platform | Ubuntu 20.04/22.04/24.04, CUDA 11.4–13.0 |
| Integration APIs | C++, Python, ROS2 |
| Output interfaces | Ethernet (API); HDMI (visual overlay); USB audio (speaker) |

Stereo Geometry - Detection Range by Lens Configuration

This table shows key stereo parameters for the two lens options best suited to deployment on a small aircraft, assuming a 2.5 m baseline and the IMX490 sensor.

| Parameter | 16 mm (wide) | 50 mm (tele) |
| --- | --- | --- |
| Horizontal field of view | 30.2° | 9.9° |
| Vertical field of view | 19.8° | 6.4° |
| Coverage at 500 m | 270 m × 173 m | 86 m × 56 m |
| Disparity at 500 m | 27 px | 83 px |
| Disparity at 1000 m | 13 px | 42 px |
| Depth resolution at 500 m (0.25 px sub-pixel) | 4.7 m | 1.5 m |
| Depth resolution at 1000 m (0.25 px sub-pixel) | 18.8 m | 6.0 m |
| GSD at 500 m | 94 mm/px | 30 mm/px |
| Min. detectable object at 500 m (~3 px) | 0.28 m | 0.09 m |
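The figures in this table follow from standard pinhole stereo geometry. The sketch below reproduces them from the stated sensor parameters (3.0 µm pixel pitch, 2880 × 1860 pixels) and the 2.5 m baseline; the function names are illustrative, and lens distortion is ignored.

```python
import math

PIXEL_PITCH_M = 3.0e-6          # IMX490 pixel pitch
WIDTH_PX, HEIGHT_PX = 2880, 1860
BASELINE_M = 2.5                # baseline assumed by the table


def fov_deg(n_pixels: int, focal_m: float) -> float:
    """Full field of view across n_pixels for an ideal pinhole lens."""
    return math.degrees(2 * math.atan(n_pixels * PIXEL_PITCH_M / (2 * focal_m)))


def disparity_px(range_m: float, focal_m: float) -> float:
    """Stereo disparity d = f * B / (Z * pitch), in pixels."""
    return focal_m * BASELINE_M / (range_m * PIXEL_PITCH_M)


def depth_resolution_m(range_m: float, focal_m: float,
                       subpixel: float = 0.25) -> float:
    """Depth change per `subpixel` of disparity: Z^2 * pitch * s / (f * B)."""
    return range_m ** 2 * PIXEL_PITCH_M * subpixel / (focal_m * BASELINE_M)


def gsd_m(range_m: float, focal_m: float) -> float:
    """Footprint of one pixel at the given range."""
    return range_m * PIXEL_PITCH_M / focal_m


for focal_mm in (16, 50):
    f = focal_mm / 1000.0
    print(f"{focal_mm} mm: HFoV {fov_deg(WIDTH_PX, f):.1f} deg, "
          f"disparity@500m {disparity_px(500, f):.1f} px, "
          f"depth res@500m {depth_resolution_m(500, f):.2f} m, "
          f"GSD@500m {gsd_m(500, f) * 1000:.1f} mm/px")
```

Because depth resolution scales with the square of range and inversely with focal length times baseline, doubling the range quadruples the depth uncertainty, which is why the 1,000 m values are four times the 500 m values.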

Environmental Conditions

| Parameter | Specification | Standard / source |
| --- | --- | --- |
| Operating temperature | | Aetina AIE-PX23 datasheet; Sony IMX490 AEC-Q100 |
| Storage temperature | −40°C to +85°C | Aetina AIE-PX23 datasheet |
| Humidity | 95% @ 40°C, non-condensing | Aetina AIE-PX23 datasheet |
| Vibration | 1 Grms, random, 5–500 Hz, 1 hr/axis | IEC 60068-2-64 |
| Shock | 10 G, half sine, 11 ms | IEC 60068-2-27 |
| Certifications | CE / FCC Class A / UKCA | Aetina AIE-PX23 |
| IP rating | | Lucid Triton TDR054S-CC datasheet |